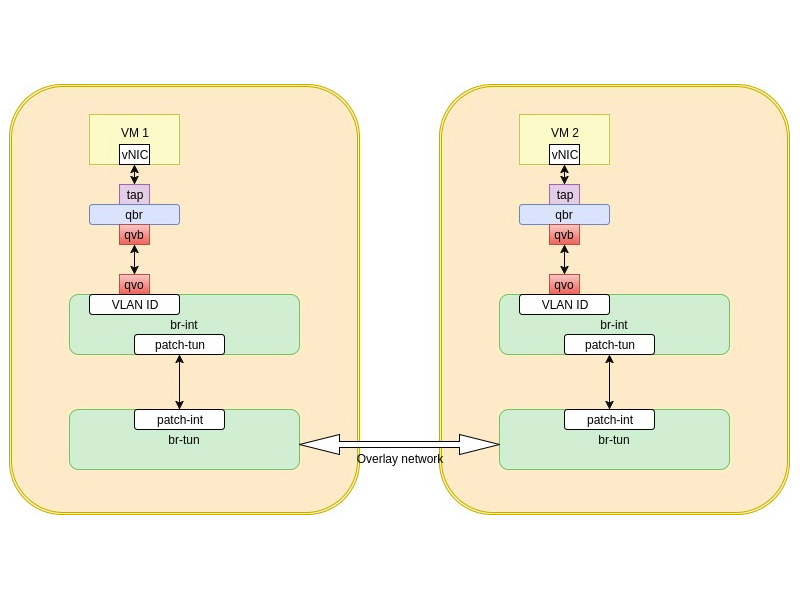

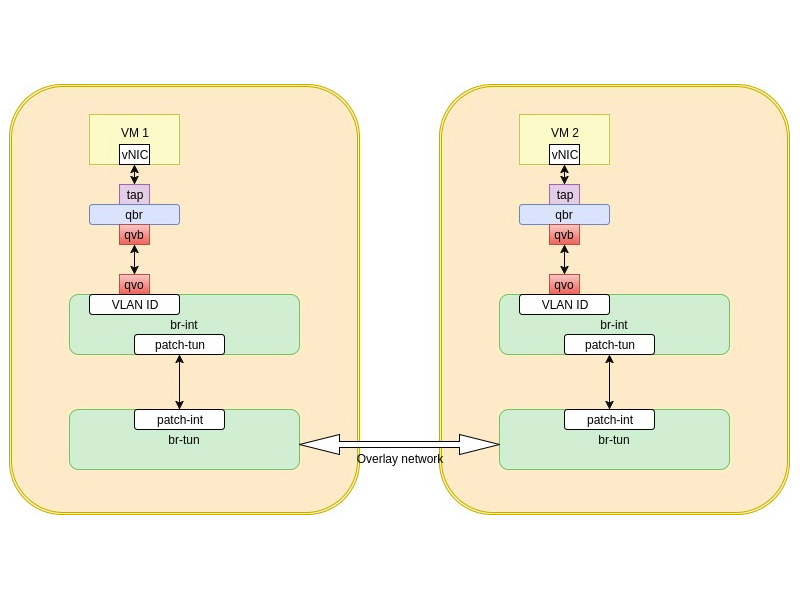

VM to VM communication, same network, different compute hosts

In the last post, we covered VM to VM communication when the VMs belong to the same network and happen to be deployed on the same host. This is a good scenario, but in a big OpenStack deployment, it’s unlikely that all your VMs belonging to the same network will end up on the same compute host. The more likely scenario is that VMs will be deployed on multiple compute hosts.

When the VMs lived on the same host, unicast traffic was handled by br-int. But we have to remember that br-int is local to the compute host, so when VMs get deployed on multiple compute hosts another technique is needed. Traffic has to flow between the compute hosts over an overlay network. br-tun is responsible for establishing and handling the overlay network, which can be VXLAN or GRE depending on your choice.

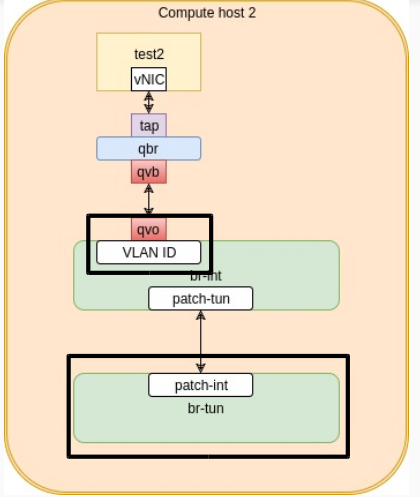

Let’s see what this looks like.

When VM1 wants to send unicast traffic to VM2, the traffic has to flow down from the vNIC to the tap, to the qbr, to the qvb-qvo veth pair. This time it gets VLAN tagged on br-int but has to exit through the patch interface to the br-tun bridge. The br-tun bridge strips the VLAN ID from the traffic and pushes it to every compute host in the environment over a dedicated lane, identified by a VXLAN tunnel ID. You can think of the VXLAN tunnel ID as a way to segregate traffic from different networks when it enters the overlay network (VXLAN in our case).
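The outbound translation that br-tun performs can be sketched as a toy model in Python. This is only an illustration, not how Open vSwitch is implemented: the `Frame` class, the `egress` function, the host names and the MAC addresses are all made up for the example, and the VLAN 1 to tunnel ID 0x39 mapping is borrowed from the walkthrough later in this post.

```python
from dataclasses import dataclass, replace
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Frame:
    """Hypothetical, heavily simplified Ethernet frame."""
    src_mac: str
    dst_mac: str
    vlan: Optional[int] = None       # local VLAN tag set on br-int
    tunnel_id: Optional[int] = None  # VXLAN tunnel ID set on br-tun


def egress(
    frame: Frame,
    vlan_to_tunnel: Dict[int, int],
    remote_hosts: List[str],
) -> List[Tuple[str, Frame]]:
    """Strip the local VLAN tag, set the tunnel ID, and flood to every remote host."""
    tunnel_id = vlan_to_tunnel[frame.vlan]
    encapsulated = replace(frame, vlan=None, tunnel_id=tunnel_id)
    return [(host, encapsulated) for host in remote_hosts]


# Assumed values: one local VLAN, three remote hosts.
frame = Frame("fa:16:3e:00:00:01", "fa:16:3e:00:00:02", vlan=1)
copies = egress(frame, {1: 0x39}, ["compute2", "compute3", "network1"])
for host, sent in copies:
    print(host, sent.vlan, hex(sent.tunnel_id))
```

Every remote host receives a copy that no longer carries the local VLAN tag and instead carries the tunnel ID of the network.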

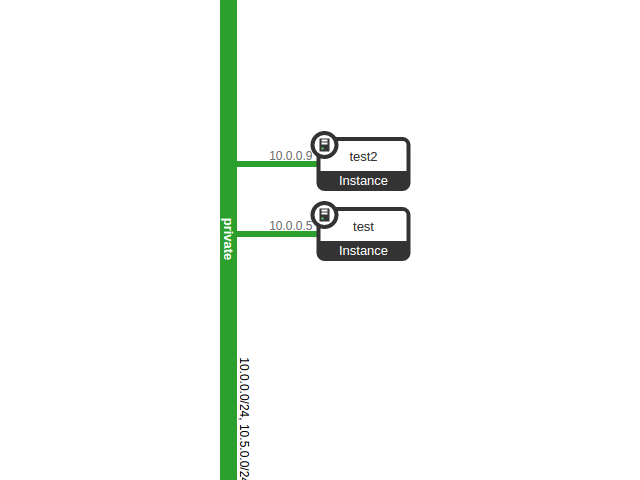

Let’s look again at the same example of the “test” and “test2” VMs, but this time they are on different compute hosts. Their logical diagram remains unchanged.

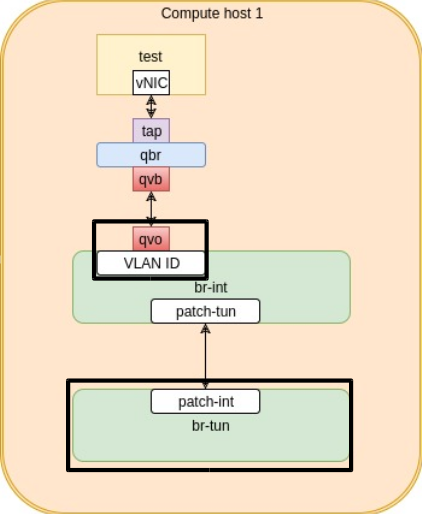

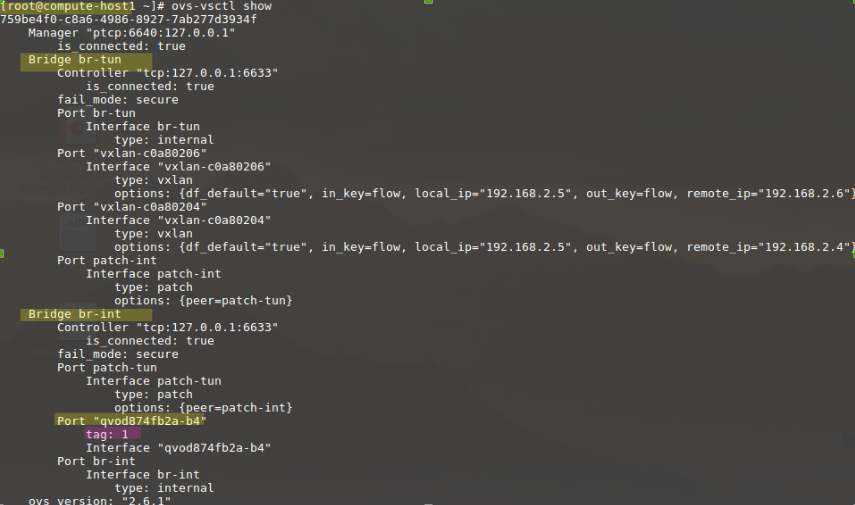

Now let’s look at Compute host 1 that hosts the “test” VM.

We will focus on the qvo portion and the br-tun bridge since we already know how the traffic will flow until it reaches the br-int. So let’s see the VLAN tag for the traffic from the “test” VM.

As you can see from the br-int definition, this traffic is tagged with VLAN ID 1.

We also know that this traffic will have to exit the compute host via br-tun. So, let’s look at the br-tun OpenFlow rules. We’re expecting to see the VLAN tag being stripped and the VXLAN tunnel ID being added before the traffic is sent over the overlay network.


As you can see, outbound traffic with VLAN tag 1 has its VLAN ID stripped and is loaded onto tunnel ID 0x39. So we know that the traffic will be sent to all compute hosts (and network hosts as well) in the environment over tunnel ID 0x39.
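OVS prints the tunnel ID in hexadecimal, while Neutron usually shows a network's segmentation ID in decimal, so it can help to convert between the two when matching a flow to a network. A minimal sketch (the helper name is made up for this example):

```python
def tunnel_id_to_decimal(tunnel_id_hex: str) -> int:
    """Convert a hexadecimal tunnel ID such as '0x39' to decimal."""
    return int(tunnel_id_hex, 16)


print(tunnel_id_to_decimal("0x39"))  # 57
```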

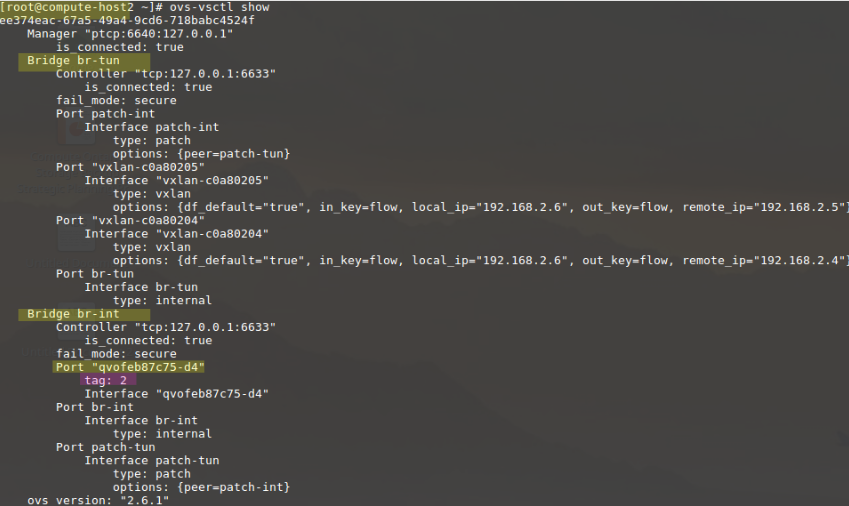

Let’s see what compute host 2, which hosts the “test2” VM, looks like.

Let’s look at the qvo portion and the br-tun definition

We see that the VLAN tag for qvo interface is 2, which is different from compute host 1. This is expected. Since the two VMs live on different hosts, there is no guarantee that their qvo interfaces will have a common VLAN ID.

So let’s look at the br-tun flow rules


As you can see, incoming traffic on VXLAN tunnel ID 0x39 gets tagged with VLAN tag 2 and is sent to br-int. In other words, a path is opened for it to reach the qvo of the “test2” instance. If the traffic is outbound from the “test2” VM, i.e. tagged with VLAN 2, its VLAN tag is stripped and the traffic is sent over the overlay network with VXLAN tunnel ID 0x39.
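Putting the two hosts side by side, the translation can be sketched as a pair of lookups. This is a simplified model rather than actual OVS behaviour; the table and function names are made up, and the values (VLAN 1 on host 1, VLAN 2 on host 2, tunnel ID 0x39) come from the example above.

```python
from typing import Dict

# Hypothetical per-host tables: local VLAN tag -> VXLAN tunnel ID.
HOST1_VLAN_TO_TUNNEL: Dict[int, int] = {1: 0x39}  # compute host 1 ("test")
HOST2_VLAN_TO_TUNNEL: Dict[int, int] = {2: 0x39}  # compute host 2 ("test2")


def to_overlay(local_vlan: int, vlan_to_tunnel: Dict[int, int]) -> int:
    """Outbound: replace the local VLAN tag with the network's tunnel ID."""
    return vlan_to_tunnel[local_vlan]


def from_overlay(tunnel_id: int, vlan_to_tunnel: Dict[int, int]) -> int:
    """Inbound: replace the tunnel ID with this host's local VLAN tag."""
    tunnel_to_vlan = {tun: vlan for vlan, tun in vlan_to_tunnel.items()}
    return tunnel_to_vlan[tunnel_id]


# Traffic from "test" (VLAN 1 on host 1) arriving at host 2.
tunnel_id = to_overlay(1, HOST1_VLAN_TO_TUNNEL)
vlan_on_host2 = from_overlay(tunnel_id, HOST2_VLAN_TO_TUNNEL)
print(hex(tunnel_id), vlan_on_host2)
```

The local VLAN tags differ per host, but the tunnel ID is the same on both, which is what ties the two ends to the same network.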

So the basic idea is that traffic gets VLAN tagged on br-int, and if it is destined to leave the host it gets sent through br-tun. The br-tun bridge does a VLAN ID to VXLAN tunnel ID translation, where a dedicated VXLAN tunnel ID is assigned to every tenant network in your environment.

One point to mention here is that br-tun is smart: instead of always sending the traffic over the overlay network to every compute host and network host in the environment, it gradually learns what sits where. In other words, the next time the “test” instance sends traffic to the “test2” instance, the traffic will be sent only to compute host 2, not to all compute hosts in the environment. This is done by adding an OpenFlow rule to the br-tun flows for the MAC address of the “test2” instance’s interface.
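The learning behaviour can be illustrated with a small Python model. It is a sketch under assumptions, not the real br-tun logic: the `TunnelBridge` class, the host names and the MAC address are hypothetical, and keying the learned entries by tunnel ID and MAC address is a simplification chosen for this example.

```python
from typing import Dict, List, Tuple


class TunnelBridge:
    """Toy model of flood-then-learn forwarding on a tunnel bridge."""

    def __init__(self, remote_hosts: List[str]) -> None:
        self.remote_hosts = list(remote_hosts)
        # (tunnel ID, MAC address) -> remote host where that MAC was seen
        self.learned: Dict[Tuple[int, str], str] = {}

    def receive(self, tunnel_id: int, src_mac: str, from_host: str) -> None:
        """Remember which remote host a source MAC address arrived from."""
        self.learned[(tunnel_id, src_mac)] = from_host

    def send(self, tunnel_id: int, dst_mac: str) -> List[str]:
        """Return the hosts to send to: one if the MAC is known, else all."""
        host = self.learned.get((tunnel_id, dst_mac))
        return [host] if host is not None else list(self.remote_hosts)


bridge = TunnelBridge(["compute2", "compute3", "network1"])
test2_mac = "fa:16:3e:00:00:02"  # made-up MAC for the "test2" interface

first = bridge.send(0x39, test2_mac)           # unknown MAC: flood
bridge.receive(0x39, test2_mac, "compute2")    # a reply from "test2" is seen
second = bridge.send(0x39, test2_mac)          # known MAC: single host
print(first, second)
```

The first send goes to every remote host; once traffic from “test2” has been seen coming from compute host 2, later sends go only there.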
